Big Data–Based CNC Milling Quality Control for Real-Time Adaptive Manufacturing

Manufacturers widely use CNC milling machines in precision machining. However, traditional quality control methods rely heavily on experience and struggle to adapt to complex machining variations.

This study proposes a quality control approach based on big data analysis.

By implementing real-time data monitoring and adaptive parameter adjustment, it optimizes key quality indicators during the machining process.

Validated through experiments, this method aims to achieve more precise and stable prediction and control of machining quality, thereby providing technical support for intelligent manufacturing and precision machining.

Analysis of Machining Quality Issues

Core machining quality issues in CNC milling primarily include surface roughness, dimensional errors, and geometric deviations.

Surface roughness directly impacts product performance and aesthetics, making it a key focus of quality control.

Dimensional errors stem from machine tool accuracy, fluctuating cutting forces, and thermal deformation.

These factors often cause parts to fail tolerance requirements and compromise assembly and functional performance.

Geometric deviations typically appear as irregularities in workpiece shape and, when severe, compromise assembly accuracy.

Traditional quality control methods struggle to cope with complex machining conditions and changing quality requirements. They lack flexibility and often produce inconsistent quality.

Therefore, employing big data analytics to dynamically adjust process parameters based on real-time data has become the key to resolving machining quality issues.

Quality Control System Design

Before implementing the quality control system, it is essential to define the objectives and constraints of the machining process.

The system aims to monitor critical process parameters in real time, predict potential deviations, and adjust machining conditions dynamically to maintain target quality levels.

System Architecture Design

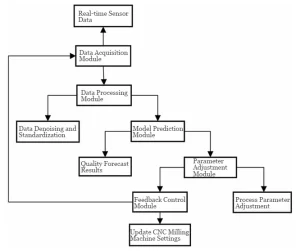

The system adopts a modular design comprising five major modules: data acquisition, data processing, model prediction, parameter adjustment, and feedback control, as shown in Figure 1.

The data acquisition module captures key process parameters in real time and transmits them to the data processing module.

The data processing module performs noise reduction, normalization, and feature extraction on the data to provide inputs for the model.

The model prediction module utilizes machine learning algorithms to forecast quality, compares the results against target standards, and generates deviation information.

The parameter adjustment module optimizes process parameters through optimization algorithms.

The feedback control module automatically updates CNC milling machine settings based on the adjusted parameters.

This forms a closed-loop control system that ensures the achievement of quality objectives.
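The closed loop described above can be sketched in a few lines. This is only an illustration under stated assumptions: the `Settings` class, the linear `predict_roughness` relation, and the proportional `adjust` rule are hypothetical stand-ins for the prediction and parameter adjustment modules, not the system's actual models.

```python
from dataclasses import dataclass


@dataclass
class Settings:
    feed_rate: float      # mm/rev
    cutting_speed: float  # m/min


def predict_roughness(settings: Settings) -> float:
    """Toy stand-in for the prediction module (hypothetical linear relation, in micrometres)."""
    return 2.0 * settings.feed_rate


def adjust(settings: Settings, deviation: float, gain: float = 0.2) -> Settings:
    """Toy stand-in for parameter adjustment: lower the feed in proportion to the deviation."""
    new_feed = max(0.1, settings.feed_rate - gain * deviation)
    return Settings(feed_rate=new_feed, cutting_speed=settings.cutting_speed)


def closed_loop(settings: Settings, target: float, tol: float = 0.01, max_cycles: int = 50):
    """Predict, compare with the target, adjust, and repeat until the deviation is within tol."""
    history = []
    for _ in range(max_cycles):
        predicted = predict_roughness(settings)
        deviation = predicted - target
        history.append((settings.feed_rate, predicted))
        if deviation <= tol:
            break
        settings = adjust(settings, deviation)  # feedback step: new settings go back to the machine
    return settings, history


final, history = closed_loop(Settings(feed_rate=0.5, cutting_speed=180.0), target=0.5)
print(final, len(history))
```

In a real deployment the prediction step would be a trained model and the adjustment step an optimizer, but the predict, compare, adjust, feed back cycle would keep the same shape.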

Data Acquisition and Prediction Module

The Data Acquisition and Prediction Module employs multiple high-precision sensors to monitor key parameters of the CNC milling process in real time.

These parameters include cutting force, vibration, temperature, spindle speed, and feed rate.

Integrated Electronics Piezo-Electric (IEPE) sensors capture vibration signals at a sampling rate of 10 kHz, enabling detection of minute variations during machining.

Infrared temperature sensors continuously monitor tool and workpiece temperatures at a sampling frequency of 1 Hz.

The industrial IoT gateway transmits raw data in real time to the central processing system.

During data processing, the system first reduces noise and normalizes the data, then extracts time-domain and frequency-domain features.

Parameter Adaptive Adjustment Mechanism

The parameter adaptive adjustment mechanism performs multi-objective optimization using particle swarm optimization or genetic algorithms.

This process integrates roughness errors and dimensional errors output by the model prediction module with target values.

The optimization objective is to minimize quality deviation while maintaining process stability. The system defines the objective function as:

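$$f(x) = \alpha\,\lvert R_{\text{pred}} - R_{\text{target}}\rvert + \beta\,\lvert E_{\text{pred}} - E_{\text{target}}\rvert$$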

In the equation: f(x) represents the objective function corresponding to the process parameter combination vector x;

α and β are weighting coefficients adjusted according to practical application requirements;

Rpred denotes the predicted roughness; Rtarget denotes the target roughness value;

Epred denotes the predicted dimensional error; Etarget denotes the target dimensional error value.
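A minimal sketch of how such a weighted objective could be evaluated for one candidate parameter combination. It assumes the deviation from each target is measured as an absolute difference (a squared difference would be handled the same way). The function name and the sample numbers are illustrative only.

```python
def objective(r_pred, r_target, e_pred, e_target, alpha=1.0, beta=1.0):
    """Weighted sum of roughness deviation and dimensional-error deviation.

    Assumption: deviations are absolute differences; alpha and beta are the
    weighting coefficients described in the text (1:1 as a starting point).
    """
    return alpha * abs(r_pred - r_target) + beta * abs(e_pred - e_target)


# Illustrative values: roughness in micrometres, dimensional error in millimetres.
score = objective(r_pred=0.8, r_target=0.5, e_pred=0.03, e_target=0.02)
print(score)
```

Because roughness and dimensional error are reported in different units, the weights also have to absorb the difference in scale. This is one reason they are tuned experimentally rather than fixed in advance.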

The adjustable parameters are cutting speed, feed rate, and tool radius.

Operators typically set the cutting speed to 100–250 m·min⁻¹, the feed rate to 0.1–0.5 mm·r⁻¹, and adjust the tool radius according to the specific workpiece during machining.

The system uses the particle swarm optimization algorithm to perform a global search across multiple machining objectives.

This approach reduces the risk of becoming trapped in local optima and helps the search approach optimal machining results.
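The particle swarm search can be illustrated with a compact standard-library implementation. The bounds come from the cutting speed and feed rate ranges quoted above. Everything else is an assumption made for the sketch: `toy_objective` is a hypothetical smooth surrogate, and the inertia and acceleration coefficients are common textbook values, not settings reported for this system.

```python
import random

# Search ranges taken from the text: cutting speed (m/min) and feed rate (mm/rev).
BOUNDS = [(100.0, 250.0), (0.1, 0.5)]


def toy_objective(x):
    """Hypothetical surrogate with its minimum at speed=180, feed=0.2."""
    speed, feed = x
    return ((speed - 180.0) / 150.0) ** 2 + ((feed - 0.2) / 0.4) ** 2


def pso(objective, bounds, n_particles=20, n_iter=100, w=0.7, c1=1.5, c2=1.5, seed=0):
    rng = random.Random(seed)
    dim = len(bounds)
    pos = [[rng.uniform(lo, hi) for lo, hi in bounds] for _ in range(n_particles)]
    vel = [[0.0] * dim for _ in range(n_particles)]
    pbest = [p[:] for p in pos]                  # best position seen by each particle
    pbest_val = [objective(p) for p in pos]
    g = min(range(n_particles), key=lambda i: pbest_val[i])
    gbest, gbest_val = pbest[g][:], pbest_val[g]  # best position seen by the swarm
    for _ in range(n_iter):
        for i in range(n_particles):
            for d, (lo, hi) in enumerate(bounds):
                r1, r2 = rng.random(), rng.random()
                vel[i][d] = (w * vel[i][d]
                             + c1 * r1 * (pbest[i][d] - pos[i][d])
                             + c2 * r2 * (gbest[d] - pos[i][d]))
                # Clamp to the admissible parameter range.
                pos[i][d] = min(hi, max(lo, pos[i][d] + vel[i][d]))
            val = objective(pos[i])
            if val < pbest_val[i]:
                pbest[i], pbest_val[i] = pos[i][:], val
                if val < gbest_val:
                    gbest, gbest_val = pos[i][:], val
    return gbest, gbest_val


best, best_val = pso(toy_objective, BOUNDS)
print(best, best_val)
```

In the actual system the objective would call the quality prediction model instead of a closed-form surrogate, and tool radius could be added as a third dimension with its own bounds.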

In practical applications, experiments determine α and β.

They are typically set to 1:1 initially and adjusted based on actual machining requirements and process parameters.

Quality Modeling and Optimization Algorithms

Before feature extraction, raw machining data must be organized and structured to support accurate modeling.

Quality modeling involves establishing relationships between process parameters (such as cutting speed, feed rate, and tool radius) and output indicators (surface roughness, dimensional errors, geometric deviations).

Optimization algorithms, including particle swarm optimization and genetic algorithms, are then applied to determine the best combination of parameters for minimizing quality deviations while maintaining process stability.

Data Preprocessing and Feature Engineering

After data acquisition, noise removal is performed first using wavelet transforms or median filtering to eliminate high-frequency noise, ensuring data smoothness and stability.
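As an illustration of the median filtering option, the sketch below applies a sliding median to a short signal containing two spikes. The window length, the edge padding strategy, and the sample values are assumptions chosen for the example.

```python
from statistics import median


def median_filter(signal, window=5):
    """Sliding-window median with edge values repeated as padding."""
    if window % 2 == 0:
        raise ValueError("window must be odd")
    half = window // 2
    padded = [signal[0]] * half + list(signal) + [signal[-1]] * half
    return [median(padded[i:i + window]) for i in range(len(signal))]


# Illustrative vibration samples with two impulsive outliers (9.0 and -7.0).
noisy = [1.0, 1.1, 9.0, 1.0, 0.9, 1.0, -7.0, 1.1, 1.0]
smooth = median_filter(noisy, window=3)
print(smooth)
```

A median filter is particularly effective against isolated spikes, while wavelet denoising is usually the better fit for broadband noise. Which one suits a given sensor channel is a judgment call.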

Next, the data are normalized using the Z-score method:

$$z = \frac{x - \mu}{\sigma}$$

In the formula: z represents the normalized value; x denotes the raw data; μ is the mean; σ is the standard deviation.
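A short sketch of Z-score normalization applied to one feature column. The temperature readings are made up for the example, and the use of the population standard deviation is an assumption.

```python
from statistics import fmean, pstdev


def z_score(values):
    """Return (x - mean) / std for each value, using the population standard deviation."""
    mu = fmean(values)
    sigma = pstdev(values)
    if sigma == 0:
        raise ValueError("constant feature: standard deviation is zero")
    return [(v - mu) / sigma for v in values]


temps = [41.0, 43.0, 45.0, 47.0, 49.0]  # illustrative temperature readings
z = z_score(temps)
print(z)
```

For live data, the mean and standard deviation would normally be estimated once on the training data and then reused, so that incoming samples are scaled consistently with what the model saw during training.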

Normalization ensures features are on the same scale, preventing certain features from exerting excessive influence on the model.

Finally, feature construction is performed, primarily extracting key features from both the time domain and frequency domain.

In the time domain, extracted features include mean, standard deviation, and kurtosis.
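The three time-domain features can be computed directly from a window of samples. The sketch below assumes the non-excess (Pearson) definition of kurtosis, the fourth central moment divided by the squared variance. The function name is illustrative.

```python
from statistics import fmean, pstdev


def time_domain_features(signal):
    """Mean, standard deviation, and Pearson kurtosis of one signal window."""
    mu = fmean(signal)
    sigma = pstdev(signal)
    # Fourth standardized moment; a normal distribution gives a value near 3.
    kurt = fmean([((v - mu) / sigma) ** 4 for v in signal])
    return {"mean": mu, "std": sigma, "kurtosis": kurt}


features = time_domain_features([1.0, -1.0, 1.0, -1.0])
print(features)
```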

In the frequency domain, the Fast Fourier Transform (FFT) is used to compute the power spectral density, which captures the signal’s frequency components.

Principal Component Analysis (PCA) is then applied for dimensionality reduction, which removes redundant features and lowers computational complexity while retaining most of the variance in the data.
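To make the frequency-domain step concrete, the sketch below implements a small radix-2 FFT and a one-sided periodogram estimate of the power spectral density. The 10 kHz sampling rate matches the vibration channel described earlier. The 1250 Hz test tone, the window length of 1024, and the plain periodogram (no windowing or averaging) are assumptions made for illustration.

```python
import cmath
import math


def fft(x):
    """Recursive radix-2 Cooley-Tukey FFT; the length must be a power of two."""
    n = len(x)
    if n == 0 or n & (n - 1):
        raise ValueError("length must be a power of two")
    if n == 1:
        return list(x)
    even = fft(x[0::2])
    odd = fft(x[1::2])
    out = [0j] * n
    for k in range(n // 2):
        t = cmath.exp(-2j * cmath.pi * k / n) * odd[k]
        out[k] = even[k] + t
        out[k + n // 2] = even[k] - t
    return out


def periodogram(signal, fs):
    """One-sided power spectral density estimate from a single FFT."""
    n = len(signal)
    spectrum = fft([complex(v) for v in signal])
    freqs = [k * fs / n for k in range(n // 2 + 1)]
    psd = [abs(spectrum[k]) ** 2 / (fs * n) for k in range(n // 2 + 1)]
    for k in range(1, n // 2):
        psd[k] *= 2.0  # fold in the power from the negative frequencies
    return freqs, psd


fs = 10_000.0  # Hz, vibration sampling rate from the text
n = 1024
signal = [math.sin(2 * math.pi * 1250.0 * i / fs) for i in range(n)]
freqs, psd = periodogram(signal, fs)
peak_freq = freqs[max(range(len(psd)), key=psd.__getitem__)]
print(peak_freq)
```

Features such as the dominant frequency or the power in selected bands can then be read off the `psd` list and passed on to the model.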

The processed features serve as input data for subsequent quality prediction model training.

Prediction Model Design

The eXtreme Gradient Boosting (XGBoost) model is selected as the primary prediction model.

It demonstrates high efficiency and accuracy when handling large-scale datasets, excels particularly in addressing nonlinear problems, and effectively manages high-dimensional data.

The XGBoost model employs a gradient boosting tree algorithm, progressively enhancing prediction accuracy by integrating multiple weak models.

It is well-suited for processing complex data featuring diverse feature interactions.

The model takes preprocessed feature data as input, primarily time-domain and frequency-domain features.

These features effectively capture key factors influencing quality variations during processing.

Time-domain features reflect the fundamental statistical characteristics of signals.

Frequency-domain features reveal frequency-based changes during processing, particularly under factors like vibration and thermal deformation.

Together, these features provide valuable insights for quality prediction.

Data undergoes rigorous preprocessing before model input, including steps like noise removal and normalization.

This ensures features are processed on a consistent scale, preventing interference from differing feature scales in model results.

Cross-validation divides the dataset into training and validation sets, ensuring robustness in model training.

Feature selection is optimized to guarantee the validity of model input data.

Parameter Back-Calculation and Optimization Strategy

Based on the discrepancy between model prediction errors and target errors, a back-calculation method is employed to derive suitable cutting parameter combinations.

This process involves not only a strategy for parameter back-derivation from errors but also consideration of the interactive relationships among process parameters.

Building upon this foundation, global searches are conducted using particle swarm optimization algorithms or genetic algorithms to ensure optimal solutions are identified.

Unlike traditional local optimization methods, these global optimization algorithms are less prone to getting stuck in local optima and seek the most balanced parameter combination across multiple objectives.

For multi-objective optimization, the system adjusts the weight of each objective based on process requirements.

For instance, when surface finish requirements are strict, the weight of the roughness objective is increased and the other weights are adjusted accordingly.

In practical applications, the optimization algorithm continuously feeds back and adjusts parameters to ensure each machining operation achieves optimal performance under varying environmental conditions.

Model Training and Evaluation

The dataset was split into training and validation sets at a 7:3 ratio. Model performance was evaluated using K-fold cross-validation (K=5).
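The splitting scheme can be sketched with index lists. The text does not say whether the 5-fold cross-validation runs on the whole dataset or only on the training portion, so the sketch assumes the latter. The sample count of 100 and the helper names are illustrative.

```python
import random


def train_validation_split(n_samples, train_fraction=0.7, seed=0):
    """Shuffle sample indices and split them 7:3 into training and validation sets."""
    idx = list(range(n_samples))
    random.Random(seed).shuffle(idx)
    cut = round(n_samples * train_fraction)
    return idx[:cut], idx[cut:]


def k_fold(indices, k=5):
    """Yield (training, held_out) index lists for each of the k folds."""
    folds = [indices[i::k] for i in range(k)]
    for i in range(k):
        held_out = folds[i]
        training = [j for f, fold in enumerate(folds) if f != i for j in fold]
        yield training, held_out


train_idx, val_idx = train_validation_split(100)
splits = list(k_fold(train_idx, k=5))
print(len(train_idx), len(val_idx), len(splits))
```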

Input features for the XGBoost model included both time-domain and frequency-domain features, which required normalization.

Hyperparameters were optimized via grid search. Under optimal conditions, the tree depth was 6, learning rate was 0.1, subsampling ratio was 0.8, and L2 regularization was applied to prevent overfitting.
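The reported optimum can be written as a parameter dictionary and placed in the context of a grid. The keys follow the parameter names used by XGBoost's scikit-learn interface (`max_depth`, `learning_rate`, `subsample`, `reg_lambda`). The grid values themselves and the L2 strength of 1.0 are assumptions, since the text reports only the selected settings.

```python
from itertools import product

# Settings reported in the text; reg_lambda (L2 strength) is an assumed value.
xgb_params = {
    "max_depth": 6,
    "learning_rate": 0.1,
    "subsample": 0.8,
    "reg_lambda": 1.0,
    "objective": "reg:squarederror",
}

# Hypothetical search grid that contains the reported optimum.
grid = {
    "max_depth": [4, 6, 8],
    "learning_rate": [0.05, 0.1, 0.2],
    "subsample": [0.6, 0.8, 1.0],
}

# Grid search evaluates every combination, typically with cross-validation.
candidates = [dict(zip(grid, combo)) for combo in product(*grid.values())]
print(len(candidates))
```

Each candidate would be scored by cross-validated error, and the combination with the lowest error kept.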

On the test set, the model achieved a root mean square error (RMSE) of 0.18 μm for roughness prediction with a coefficient of determination (R²) of 0.94.

For dimensional error prediction, the RMSE was 0.03 mm with an R² of 0.92, demonstrating excellent predictive accuracy and generalization capability.
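For reference, both metrics can be computed from paired measurements and predictions as follows. The four sample values are invented for the example and are not the study's data.

```python
import math


def rmse(y_true, y_pred):
    """Root mean square error between measured and predicted values."""
    return math.sqrt(sum((t - p) ** 2 for t, p in zip(y_true, y_pred)) / len(y_true))


def r_squared(y_true, y_pred):
    """Coefficient of determination: 1 - SS_res / SS_tot."""
    mean_true = sum(y_true) / len(y_true)
    ss_res = sum((t - p) ** 2 for t, p in zip(y_true, y_pred))
    ss_tot = sum((t - mean_true) ** 2 for t in y_true)
    return 1.0 - ss_res / ss_tot


measured = [0.5, 0.7, 0.9, 1.1]   # illustrative roughness values, micrometres
predicted = [0.6, 0.6, 1.0, 1.0]
print(rmse(measured, predicted), r_squared(measured, predicted))
```

RMSE is expressed in the units of the predicted quantity, whereas R² is dimensionless, which is why the two are usually reported together.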

This model is suitable for quality control in actual machining processes, exhibiting high stability and accuracy.

System Implementation and Experimental Validation

To validate the proposed system, a series of experiments were designed to test its performance under real machining conditions.

These experiments focus on evaluating the effectiveness of real-time data monitoring, predictive modeling, and adaptive parameter adjustment in improving surface quality and dimensional accuracy.

Both aluminum and stainless steel workpieces were selected to assess the system’s adaptability across different materials, cutting conditions, and machining targets.

Experimental Platform and System Deployment

The experimental platform utilizes a HAAS VF-4 CNC milling machine equipped with a Fanuc 0i-MF control system for machining experiments.

The platform incorporates high-precision sensors, including vibration sensors, infrared temperature sensors, and cutting force sensors.

Collected machining data is transmitted in real-time to the data processing system via an IoT gateway.

Data acquisition is set at a frequency of 10 kHz to enable detailed monitoring of the machining process.

The system deployment comprises a data acquisition module, a data processing and model prediction module, and a feedback control module.

The data acquisition module collects raw data in real time from the sensors and transmits it via industrial Ethernet to the central control system.

The processing system incorporates edge computing nodes that perform preliminary data cleaning and feature extraction to ensure data timeliness and accuracy.

The prediction module employs an XGBoost model to forecast machining quality in real time based on input features.

The feedback control module then adjusts CNC system parameters based on this prediction.

Processing Quality Target Verification Experiment

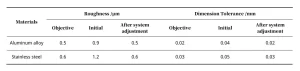

To validate the system’s performance in actual machining, different quality targets—specifically surface roughness and dimensional error—were designed.

Adjustments were made for each target to test the system’s adaptive regulation capability.

The experiment selected two materials—aluminum alloy (grade 6061) and stainless steel (grade 304)—for high-precision machining.

For each machining target, the system dynamically adjusted cutting speed, feed rate, and tool radius based on real-time data to ensure final quality met specifications.

Results are shown in Table 1. The system successfully reduced aluminum alloy roughness from 0.9 μm to 0.5 μm and dimensional error from 0.04 mm to 0.02 mm, demonstrating significant improvement.

For stainless steel, surface roughness decreased from 1.2 μm to 0.6 μm, while dimensional error reduced from 0.05 mm to 0.03 mm.

These data demonstrate the system’s adaptability across different materials and its precise parameter adjustment capabilities.

Effect Analysis and Comparison Results

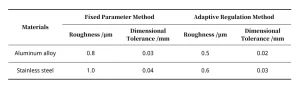

To evaluate system performance, experiments compared machining outcomes using traditional fixed-parameter methods versus big data-driven adaptive adjustment methods.

Tests were conducted on both aluminum alloy and stainless steel materials, measuring surface roughness and dimensional errors while comparing the two approaches’ performance.

Results are shown in Table 2. In aluminum alloy machining, the adaptive adjustment method reduced surface roughness from 0.8 μm to 0.5 μm and dimensional error from 0.03 mm to 0.02 mm.

For stainless steel machining, surface roughness decreased from 1.0 μm to 0.6 μm, and dimensional error improved from 0.04 mm to 0.03 mm.
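The relative size of these improvements follows directly from the before and after values quoted above. The short calculation below uses only those reported numbers; the dictionary layout and the function name are illustrative.

```python
def relative_reduction(before, after):
    """Percentage reduction from the fixed-parameter value to the adaptive value."""
    return (before - after) / before * 100.0


# (fixed-parameter value, adaptive value) as reported in the text.
results = {
    ("aluminum alloy", "roughness_um"): (0.8, 0.5),
    ("aluminum alloy", "dim_error_mm"): (0.03, 0.02),
    ("stainless steel", "roughness_um"): (1.0, 0.6),
    ("stainless steel", "dim_error_mm"): (0.04, 0.03),
}

reductions = {key: round(relative_reduction(*pair), 1) for key, pair in results.items()}
for key, pct in reductions.items():
    print(key, f"{pct}%")
```

This gives reductions of 37.5% and 33.3% for aluminum alloy roughness and dimensional error, and 40.0% and 25.0% for stainless steel.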

These improvements demonstrate the significant advantages of the adaptive adjustment method in enhancing machining quality and precision.

Conclusion

This study proposes a CNC milling machine quality control method based on big data analysis.

By integrating real-time data with adaptive adjustment mechanisms, it significantly enhances machining accuracy and stability.

Experimental validation demonstrates the system’s ability to effectively adjust parameters across diverse materials and machining environments, achieving predetermined quality targets.

Future research may further optimize the algorithm’s real-time responsiveness, expand the system’s adaptability under varied operating conditions, and explore more efficient multi-objective optimization strategies.

What are the main machining quality problems in CNC milling, and why are they difficult to control?

CNC milling quality problems mainly include surface roughness, dimensional errors, and geometric deviations. These issues arise from factors such as cutting force fluctuations, thermal deformation, vibration, and machine tool accuracy. Traditional experience-based quality control methods lack flexibility and struggle to adapt to complex, dynamic machining conditions, leading to unstable quality and tolerance failures.

How does big data improve quality control in CNC milling machines?

Big data enables real-time monitoring and analysis of machining parameters such as cutting force, vibration, temperature, feed rate, and spindle speed. By processing large volumes of sensor data and applying machine learning models, the system can predict machining quality in advance and dynamically adjust parameters, achieving more stable, precise, and intelligent quality control.

What is the architecture of a big data–driven CNC milling quality control system?

The proposed system adopts a modular, closed-loop architecture consisting of data acquisition, data processing, quality prediction, parameter optimization, and feedback control modules. Real-time sensor data is processed and analyzed to predict quality deviations, while optimized parameters are automatically fed back to the CNC machine, ensuring continuous quality improvement during machining.

Which sensors and data features are critical for machining quality prediction?

High-precision sensors such as vibration sensors, infrared temperature sensors, and cutting force sensors are essential for capturing real-time machining conditions. Key data features include time-domain statistics (mean, standard deviation, kurtosis) and frequency-domain features extracted using FFT. Feature dimensionality is further optimized using PCA to enhance prediction accuracy and computational efficiency.

Why is XGBoost suitable for CNC machining quality prediction?

XGBoost is well-suited for CNC machining quality prediction because it efficiently handles nonlinear relationships and high-dimensional data. By integrating multiple weak learners through gradient boosting, it achieves high prediction accuracy and robustness. Experimental results show that XGBoost delivers excellent performance in predicting surface roughness and dimensional errors under complex machining conditions.

What advantages does adaptive parameter optimization offer over traditional fixed-parameter machining?

Adaptive parameter optimization dynamically adjusts cutting speed, feed rate, and tool radius based on real-time quality predictions and optimization algorithms such as particle swarm optimization or genetic algorithms. Compared with traditional fixed-parameter methods, it significantly reduces surface roughness and dimensional errors, improves process stability, and enhances machining quality across different materials and operating environments.